The missing layer in AI-assisted development

We have SBOMs for dependencies and signed commits for authorship. Why don't we have provenance for AI contributions? Introducing the WHENCE standard.

Here's a thing that's been bothering me.

Every engineering team I talk to is using AI coding tools. Claude Code, Cursor, Codex, whatever is hot next week. The output is impressive. The velocity gains are real. But when I look at how we review that code, we're still pretending it was written the old way, by hand.

A pull request lands. The diff shows what changed. The commit message says why. But the thing that actually drove the change (the prompts, the iterations, the back-and-forth with the AI tool) has vanished. The sessions have closed, the context is gone, and the reviewer is left reading output with no insight into intent.

This is a gap, and it's getting wider fast.

CircleCI's 2026 State of Software Delivery report, published in February, puts numbers to it. Across 28 million workflows, average throughput increased 59% year over year — teams are writing more code than ever. But main branch success rates fell to their lowest level in five years, and recovery times are climbing because teams are struggling to debug code they didn't write and can't trace back to its origin. The median team saw feature branch throughput rise 15% while main branch throughput fell 7%. More code is being generated. Less is shipping. The bottleneck has moved from writing to understanding.

We've solved this problem before

When open source dependencies became the backbone of modern software, we built SBOMs (Software Bills of Materials) so you could answer "what's in this build?" When build integrity and provenance became critical, we got SLSA attestations. When authorship mattered, we got signed commits.

Each of these followed the same pattern: a new input to the software development process became significant enough that teams needed a structured, shareable record of it. AI-assisted development is that next input. But right now, there's no standard way to record which parts of your codebase were AI-assisted, what prompts produced them, or what the AI tool had access to when it generated the code.

What this means in practice

A code reviewer looks at a function. It's clean, it passes tests, it looks reasonable. But was it generated in one shot, or was it the result of fifteen prompt iterations? Did the developer ask the AI to optimise for readability or performance? Did the AI have access to the full codebase or just a single file? These questions change how you review. They change what you trust. And right now, there's no way to answer them.

For engineering leaders, the question is broader: what percentage of our codebase is AI-assisted? Which tools are our teams using? Are we developing institutional knowledge about effective prompting, or is every developer starting from scratch? Without structured records, these questions are unanswerable.

Introducing WHENCE

I've been working on WHENCE — an open standard for recording how AI contributed to a codebase. The name is the question every code reviewer asks: where did this code come from?

WHENCE records prompts, tool metadata, and code context (what files the AI saw, what diff resulted), then links those records to Git commits using Git notes — which means no pollution of commit history.
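To make that concrete, here is a minimal sketch of what attaching a trace through Git notes could look like. It is not the WHENCE schema or the git-whence implementation: the `Trace` field names and the `whence-example` notes ref are assumptions made up for illustration, and the spec defines the real ones. The only real interface used is `git notes --ref <ref> add -f -m <message> <commit>`.

```python
import json
import subprocess
from dataclasses import asdict, dataclass, field

# Hypothetical notes ref, used only for this sketch; the spec defines the real one.
NOTES_REF = "whence-example"


@dataclass
class Trace:
    """Illustrative trace record. Field names are assumptions, not the WHENCE schema."""
    tool: str                                             # which AI tool produced the change
    prompts: list[str]                                    # prompts that led to the change
    files_seen: list[str] = field(default_factory=list)   # files the tool had access to
    diff: str = ""                                        # the resulting diff


def to_note(trace: Trace) -> str:
    """Serialize the trace as JSON so it can be stored as the note body."""
    return json.dumps(asdict(trace), sort_keys=True)


def notes_command(commit: str, trace: Trace) -> list[str]:
    """Build the `git notes` invocation that attaches the trace to a commit."""
    return ["git", "notes", "--ref", NOTES_REF, "add", "-f", "-m", to_note(trace), commit]


def attach(commit: str, trace: Trace) -> None:
    """Run the command inside a Git repository; the commit object itself is untouched."""
    subprocess.run(notes_command(commit, trace), check=True)
```

Because notes live under their own ref, the commit hash stays the same whether or not a trace is attached, which is what keeps the commit history clean.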

Traces are shared by default, because the whole point is that reviewers and auditors can see them. Sensitive content is redacted at write time, before it enters any Git object.
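As a rough sketch of what "redacted at write time" means, the function below scrubs a prompt before it is serialized anywhere. The patterns and the `[REDACTED]` placeholder are illustrative assumptions, not the rules the spec prescribes; a real redactor would need a far broader rule set.

```python
import re

# Illustrative patterns only (assumptions for this sketch, not the spec's rules).
SECRET_PATTERNS = [
    # Strings shaped like an AWS access key ID.
    re.compile(r"AKIA[0-9A-Z]{16}"),
    # key=value or key: value pairs with a suspicious key name.
    re.compile(r"\b(api[_-]?key|token|password)\s*[:=]\s*\S+", re.IGNORECASE),
]
REDACTED = "[REDACTED]"


def redact(text: str) -> str:
    """Replace anything matching a secret pattern before the text is written out."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub(REDACTED, text)
    return text


def prepare_prompt_for_trace(prompt: str) -> str:
    """Redaction happens here, before the prompt reaches any Git object."""
    return redact(prompt)
```

Scrubbing before the write matters because anything that lands in a Git object is hard to remove later, so cleaning up after the fact is not a reliable fallback.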

The standard is tool-agnostic: traces from Claude Code, Codex, or Cursor should all be valid WHENCE. And the data format is deliberately separated from the linking mechanism: the spec currently defines a Git binding, but future bindings for GitHub, Bitbucket, or GitLab are architecturally possible without changing the core format.

The reference CLI is git-whence, and it's designed to fit into existing workflows: prompts accumulate in a local queue during development, and you attach them to your final commits after you've rebased and cleaned up your history. CI verifies that traces are present and valid. If a commit was co-authored by an AI tool that self-identifies (like Claude Code's Co-authored-by trailer) but has no WHENCE trace, that's a policy violation your pipeline can catch.
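The policy check described above could be sketched roughly as follows. This is not how git-whence implements verification: the `AI_MARKERS` list, the function names, and the idea of passing in a precomputed set of traced commits are all assumptions for illustration. In a real pipeline the commit messages and the set of traced commits would come from Git itself.

```python
import re

# Matches Git's Co-authored-by trailer, case-insensitively, one per line.
CO_AUTHOR = re.compile(r"^Co-authored-by:\s*(.+)$", re.IGNORECASE | re.MULTILINE)

# Hypothetical list of substrings that identify an AI co-author.
AI_MARKERS = ("claude",)


def ai_coauthors(message: str) -> list[str]:
    """Return co-author trailer values that look like a self-identifying AI tool."""
    return [
        value.strip()
        for value in CO_AUTHOR.findall(message)
        if any(marker in value.lower() for marker in AI_MARKERS)
    ]


def find_violations(commits: dict[str, str], traced: set[str]) -> list[str]:
    """Commits with an AI co-author trailer but no attached trace.

    `commits` maps commit id to commit message; `traced` holds the ids
    that already have a trace attached.
    """
    return [
        sha
        for sha, message in commits.items()
        if ai_coauthors(message) and sha not in traced
    ]
```

A CI job could fail the build whenever `find_violations` returns a non-empty list and print the offending commit ids so the author knows which traces to attach.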

What I'm looking for

WHENCE is at the draft spec stage. The format is defined, the edge cases around rebasing and secret redaction are handled, and the spec has been through multiple rounds of technical review. What it needs now is feedback from the people who'd actually use it — engineering leads thinking about AI governance, DevEx teams building internal tooling, and developers who want their PR reviewers to understand what they were trying to do.

The spec is here: github.com/zmarkan/whence/blob/main/SPEC.md

I'd particularly love input on the CI integration story (what policies would your team actually adopt?), the code context fields (what would make traces useful for your review workflow?), and alternative bindings (if you're on Bitbucket or GitLab, what would a native integration look like?).

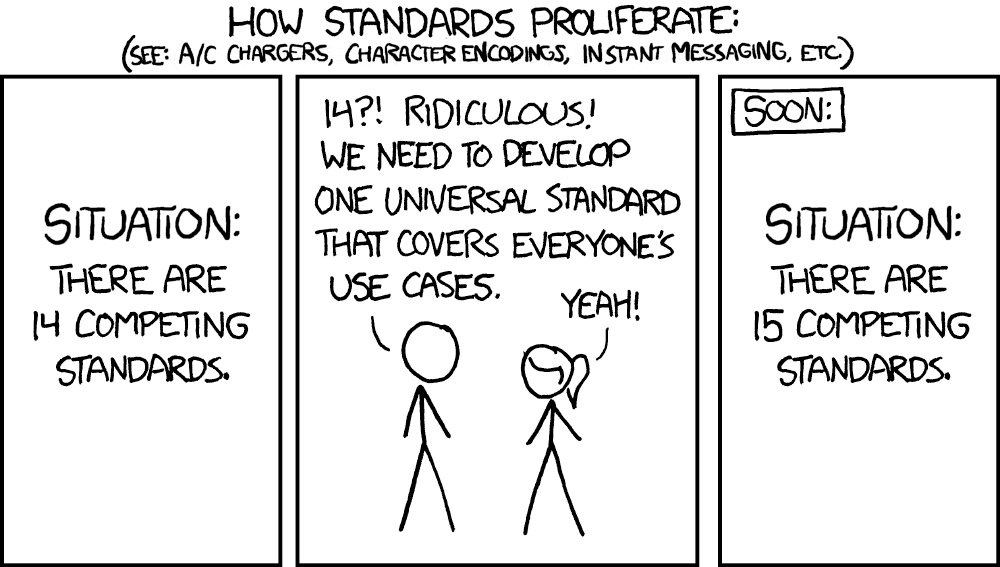

This is early-stage work on a problem I think every engineering team will need to solve. I'd rather we solve it with an open standard than with fifteen proprietary formats.